Challenge

Companies have enormous amounts of data at their disposal, ranging from operational and financial data to benchmarks, social media data, and more. This data is then transformed into comprehensive reports to inform strategic decision-making. To ensure the accuracy and reliability expected of these reports, we must first ensure that the data itself is correct, complete, and in the right format. In reality, however, the integrated data in many data warehouses is of poor quality. This is mainly due to release problems caused by poor delivery quality, corrupt data, and similar issues. The result is that the data becomes unusable. Consequently, decisions based on this poor-quality data will likely be flawed and may even result in financial losses.

Solution

For the integrated data in a data warehouse to be reliable and accurate, it needs to be tested: you need to know whether the data is correct, complete, and in the right format. In practice, this testing is usually largely manual, which has several disadvantages:

- the testing process is notoriously difficult due to the tremendous scale of ingested data. This makes comprehensive manual testing practically impossible, which leads to weakened data integrity and a higher risk of bugs slipping into production;

- manual testing is error-prone, laborious, and time-consuming;

- teams that rely primarily on manual testing ultimately end up deferring testing until dedicated testing periods, which allows bugs to accumulate;

- manual testing is not sufficiently repeatable for regression testing;

- manual tests may not be effective in finding certain classes of defects.

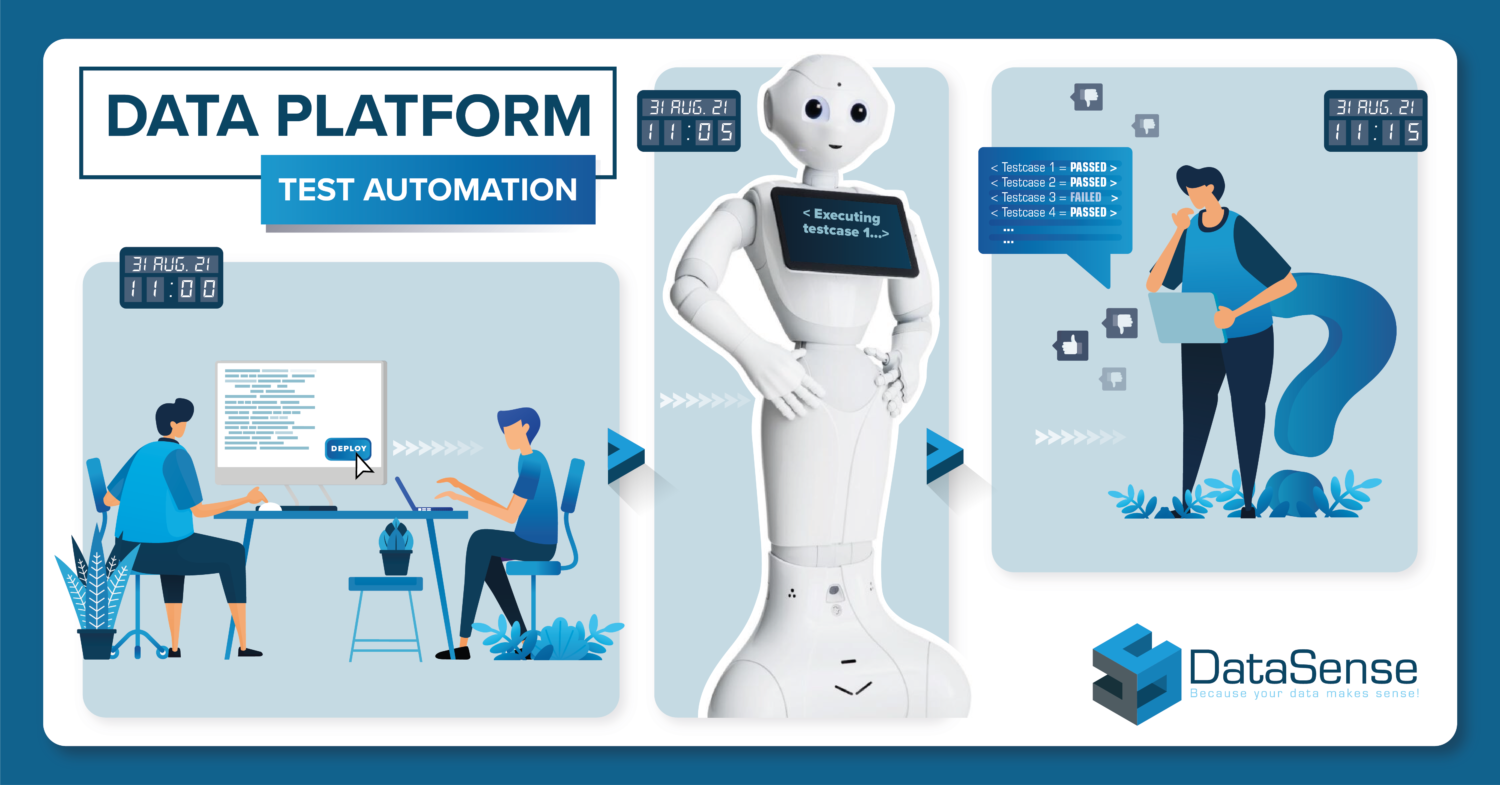

To counter these disadvantages, we use the Robot Framework for automated data platform testing. An automated approach is preferable to a manual one because it is much more time-efficient and cost-effective. It also keeps the testing process quick, so poor data quality in the data warehouse is detected in time. The Robot Framework also makes it easy to repeat the tests automatically, which helps prevent data delivery defects from recurring in future releases. As a result, companies can trust their reports to reliably inform their decision-making processes.

Robot Framework

The Robot Framework is a keyword-driven, open source test automation framework. It ships with a set of standard functions (keywords) that you can use to create your first test cases. If these functions do not cover what you need, the Robot Framework lets you create your own in Python. With such functions, you can check with a minimal number of tests whether all data entities have the correct structure, and continuously verify the business rules in the data warehouse to assure data quality.
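To make the idea of custom Python functions concrete, here is a minimal sketch of a keyword library. The class name `DataQualityLibrary`, its two checks, and the use of SQLite as a stand-in database are assumptions made for illustration, not the library described in this article. It relies on two Robot Framework conventions: public methods of a Python library class are exposed as keywords, and a keyword fails when it raises an exception.

```python
import sqlite3


class DataQualityLibrary:
    """Hypothetical keyword library; public methods become Robot Framework keywords."""

    def __init__(self, database=":memory:"):
        # SQLite is used here only so the sketch is self-contained.
        self.connection = sqlite3.connect(database)

    def table_should_have_columns(self, table, *expected_columns):
        """Fail if any of the expected columns is missing from the table."""
        # PRAGMA table_info returns one row per column; index 1 holds the column name.
        rows = self.connection.execute(f"PRAGMA table_info({table})").fetchall()
        actual = {row[1] for row in rows}
        missing = [name for name in expected_columns if name not in actual]
        if missing:
            raise AssertionError(f"Table {table} is missing columns: {missing}")

    def column_should_not_contain_nulls(self, table, column):
        """Fail if the column holds at least one NULL value."""
        count = self.connection.execute(
            f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL"
        ).fetchone()[0]
        if count:
            raise AssertionError(f"{table}.{column} contains {count} NULL value(s)")
```

In a test case, the first method would be called as the keyword `Table Should Have Columns`, followed by the table name and the expected column names as arguments. Table and column names are interpolated directly into the SQL, so a sketch like this is only suitable when they come from trusted test data.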

Data Vault 2.0 – repeatable patterns

As mentioned above, manual testing is not sufficiently repeatable for regression testing. Data Vault 2.0, however, is based on repeatable patterns, and a repeatable pattern can be automated. DataSense uses Vaultspeed to automate the data integration layer of the Data Hub: Vaultspeed generates the data model and the data pipelines. For this data integration layer, we’ve created a set of reusable test cases for automated data warehouse testing via the Robot Framework. To do so, we implemented functions that generate the test queries automatically.
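Because every hub in a Data Vault model follows the same pattern, its test queries can be derived from a small amount of metadata. The sketch below illustrates that idea only; the `Hub` dataclass, the two checks, the table and column names, and the SQLite connection are all hypothetical and do not reflect the actual functions or Vaultspeed output mentioned above.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class Hub:
    """Hypothetical metadata describing one hub table."""
    table: str
    hash_key: str
    business_key: str


def hub_test_queries(hub):
    """Return {test name: SQL}. Each query counts violations, so 0 means pass."""
    return {
        "hash key is never null": (
            f"SELECT COUNT(*) FROM {hub.table} WHERE {hub.hash_key} IS NULL"
        ),
        "business key is unique": (
            f"SELECT COUNT(*) FROM (SELECT {hub.business_key} FROM {hub.table} "
            f"GROUP BY {hub.business_key} HAVING COUNT(*) > 1) AS duplicates"
        ),
    }


def run_tests(connection, hubs):
    """Run the generated queries and return (table, test name, violations) per failure."""
    failures = []
    for hub in hubs:
        for name, sql in hub_test_queries(hub).items():
            violations = connection.execute(sql).fetchone()[0]
            if violations:
                failures.append((hub.table, name, violations))
    return failures
```

Adding a new hub to the model then only requires a new `Hub` entry; the same generated checks apply to it without writing any additional test code.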

Business-specific rules

The data model designed for reports and dashboards is modeled on business-specific rules. The test scripts for this layer can’t be generated automatically because they differ case by case.

Once written, they are included in the Robot Framework and can be reused during the release and test cycle of the data platform.